Microsoft and NVIDIA introduce parameter-efficient multimodal transformers for video representation learning - Microsoft Research

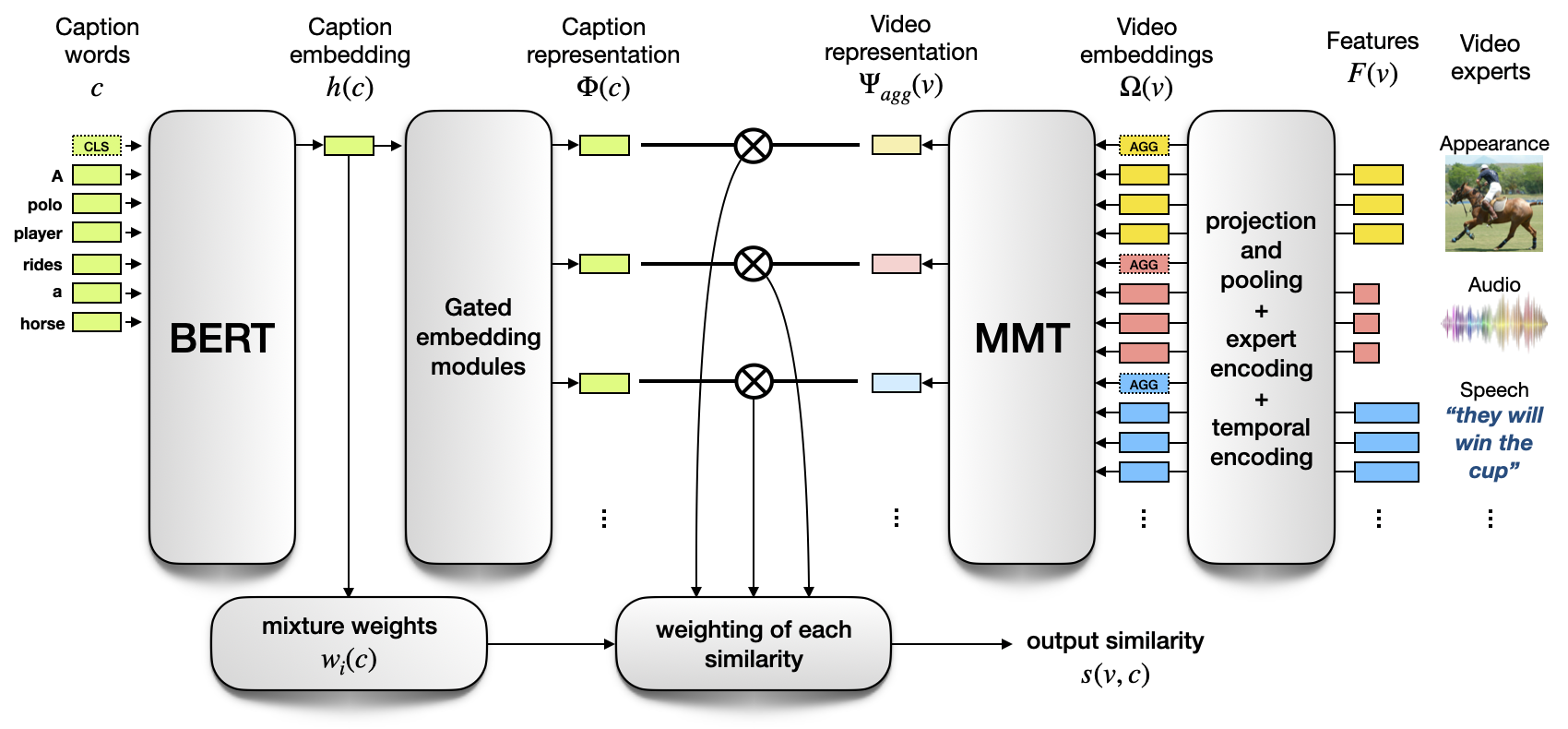

Zeta Alpha on Twitter: "5. "VATT: Transformers for Multimodal Self-Supervised Learning from Raw Video, Audio and Text" by @AkbariH70 Liangzhe Yuan @RuiQian3 Wei-Hong Chuang, Shih-Fu Chang, @YinCui1 @BoqingGo. The promised multimodal future

Unimodal and multimodal transformers: Trans-Cond and the Trans-Attn... | Download Scientific Diagram

ASD-Transformer: Efficient Active Speaker Detection Using Self and Multimodal Transformers (Gourav Datta, Tyler Etchart)

Multimodal Co-Attention Transformer for Survival Prediction in Gigapixel Whole Slide Images | Max-Lu

AI Researchers from China Suggest a Robust Foundation for Multimodal Connection Extraction Based on an Implicit Fine-Grained Multimodal Alignment and Transformer - MarkTechPost

Biomolecules | Free Full-Text | GOProFormer: A Multi-Modal Transformer Method for Gene Ontology Protein Function Prediction

![\[PDF\] Unifying Multimodal Transformer for Bi-directional Image and Text Generation | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/50f6dd2aa07074d2904f153a0e489285499436c1/1-Figure1-1.png)

MultiModal Fusion Transformer (MMFT): We treat input modalities as a... | Download Scientific Diagram

Self‐supervised multimodal fusion transformer for passive activity recognition - Koupai - 2022 - IET Wireless Sensor Systems - Wiley Online Library

GitHub - georgian-io/Multimodal-Toolkit: Multimodal model for text and tabular data with HuggingFace transformers as building block for text data

Multimodal channel-wise attention transformer inspired by multisensory integration mechanisms of the brain - ScienceDirect

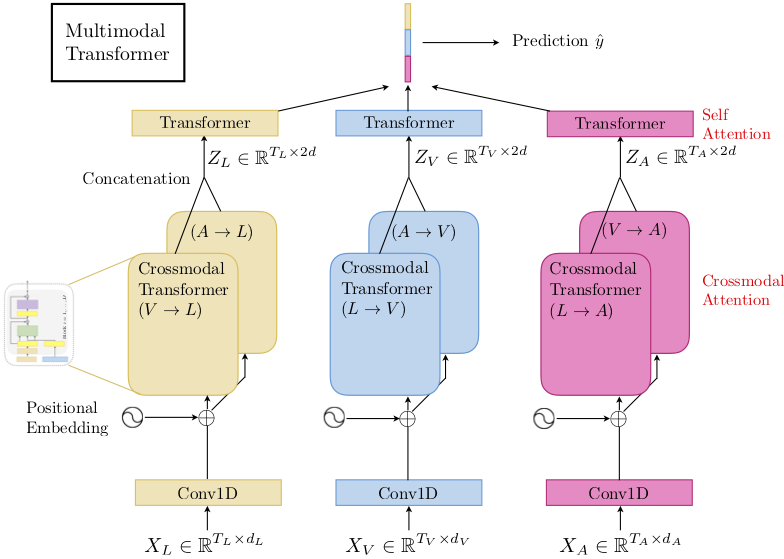

![\[PDF\] Multimodal Transformer for Unaligned Multimodal Language Sequences | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/949fef650da4c41afe6049a183b504b3cc91f4bd/4-Figure3-1.png)

![\[Paper Review\] Multimodal Transformer for Unaligned Multimodal Language Sequences - YouTube](https://i.ytimg.com/vi/JTbX4OyUF-c/maxresdefault.jpg)